Francesco Testa, founder of archviz and creative studio Prompt, gives his thoughts on the past, present, and future of AI and what it means for artists.

Prompt’s incredible visualizations, animations, and 360-degree tours bring tomorrow to life, and the studio is also heavily invested in using future technology. It currently renders in V-Ray and Corona, and it uses digital people from AXYZ, which recently became part of the Chaos family. Increasingly, though, Prompt is augmenting its workflows and output with AI.

Intrigued by Prompt’s experiments with the Stable Diffusion AI system, AXYZ director Diego Gadler spoke to founder Francesco Testa about where artificial intelligence engines have come from, and the fascinating new worlds Prompt is taking them to.

To read Francesco’s thoughts on AI and see how Prompt has put it into practice, check out the full interview on AXYZ’s blog.

Diego Gadler: Have you conducted specific tests using AI technologies?

Francesco Testa: Yes. Driven primarily by curiosity and the need to understand how these new tools could assist us in our work, we began using DALL-E almost immediately to create reconstructions of parts of cities in still images. This was a task that would have taken us much more time in Photoshop. Shortly afterward, Midjourney emerged, and we started using it to make initial visualizations of our clients' ideas. These visuals helped them guide us on the direction each image should take.

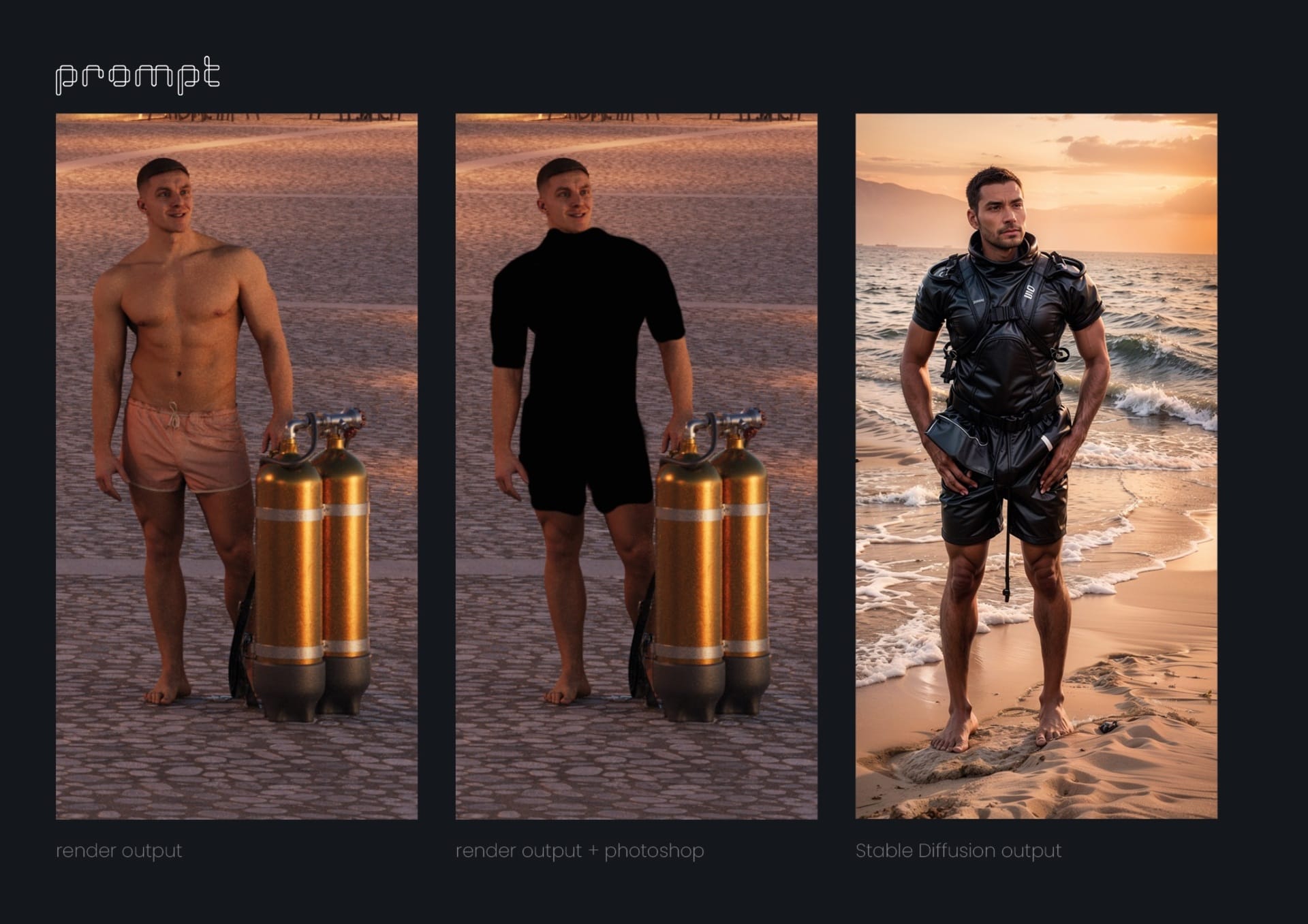

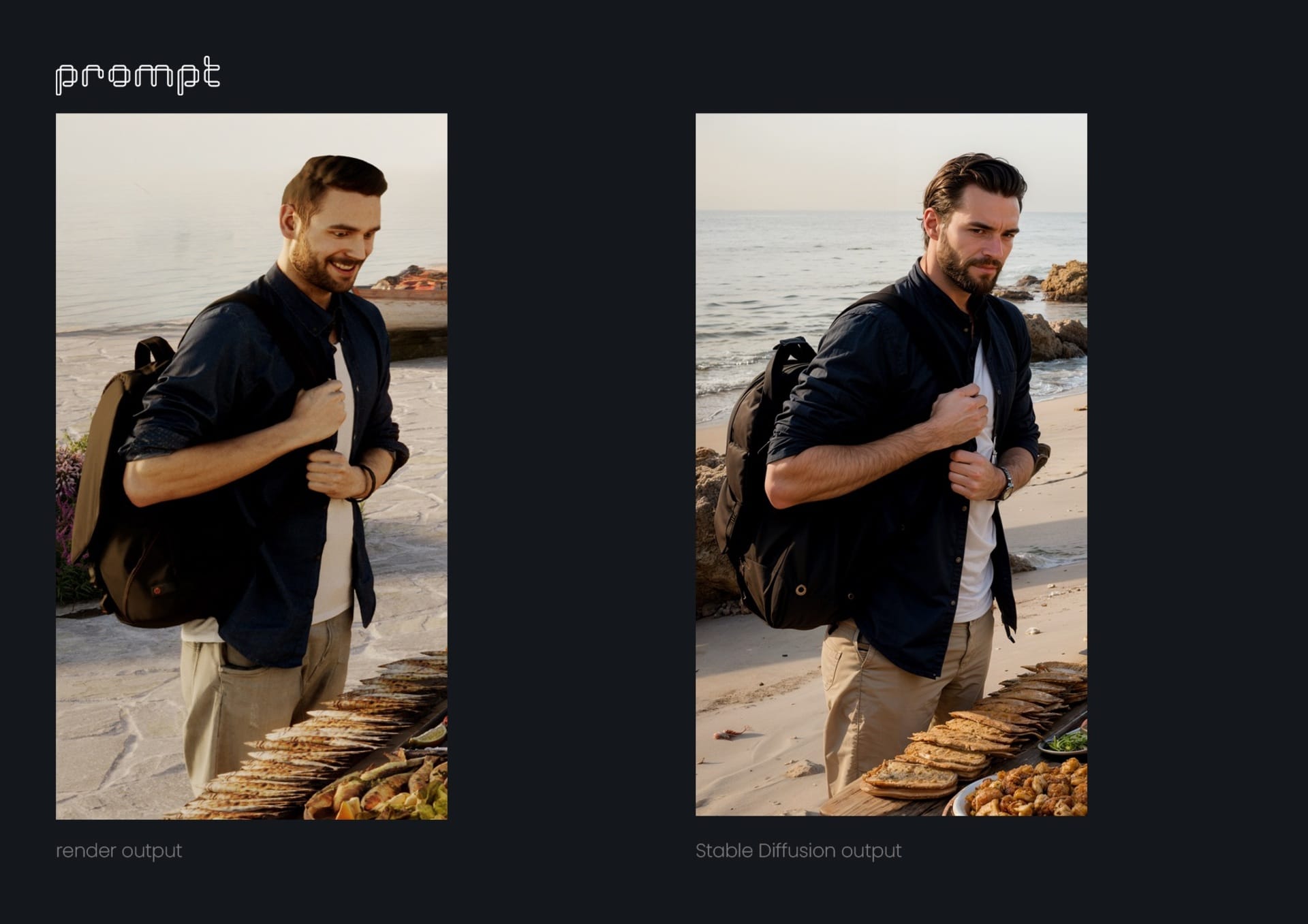

We also began developing short animation introductions for some projects using still images generated in Midjourney, which we later animated in After Effects. In parallel, we continued to learn, through trial and error, how to use the tool that is undoubtedly the most powerful for our type of work: Stable Diffusion. With it, we transitioned from animation experiments to making complex corrections in still images, improving textures, adding organic details, and even creating more realistic characters based on Anima's assets. We also used voice generation models to create initial animation drafts with voiceovers, allowing us to refine them with the client and scriptwriter before hiring a professional voice actor.

DG: What kind of results have you obtained so far? Are these purely experimental results, or have you had the opportunity to apply them in specific projects or services?

FT: We've been pleasantly surprised by how quickly we've been able to integrate these AI tools into real projects. We all know that in our industry, we often work with tight deadlines, and there's not much room for error, let alone experimentation. This is where the significance of these new tools lies, as they streamline processes and provide us with ideas and solutions that we might never have considered before, all at an impressive speed.

DG: What is your vision for the future? How do you believe these tools are transforming or will transform the work of an artist if they haven't already?

FT: We firmly believe that artists should not feel threatened by this technology. Their role, not as craftsmen but as artists, should be to create beyond the tools, to discern which of the 100 images generated in minutes is the right one, the one that works with the others. Artists should have the ability to embrace quantity; quantity has become just another element of the creative process.

In a way, AI is democratizing a large part of the artistic world that was previously reserved for major productions with actors, sets, cameras, and so on. Now, with a decent graphics card, imagination, and ingenuity, a single person can achieve good results. And this is just the ability to reproduce or imitate an existing form of language. The real challenge for artists is to find a completely genuine new language for AI. This should be our only concern.

DG: Looking ahead to the future, how do you envision the evolution of the work you are currently engaged in?

FT: Remarkable strides have already been made in static image generation. The significant challenge, however, lies in animation. Up to this point, many AI systems have worked by generating one frame at a time, treating each frame as independent of the others. This can result in instability or a lack of consistency between frames, causing the entire image to appear somewhat "shaky" during playback.

It's likely that this instability will be resolved over time. However, as mentioned earlier, the most intriguing prospect is the development of a visual language that doesn't merely mimic what we already do but instead embraces these visual inconsistencies and instabilities, viewing them not as errors but as virtues. Our near-future aspirations are rooted in the pursuit of this new mode of expression.

Check out the AXYZ blog for the full interview with Francesco.